MELD: A Multimodal Multi-Party Dataset for Emotion Recognition in Conversation

Note

🔥 If you are interested in IQ testing LLMs, check out our new work: AlgoPuzzleVQA

:fire: We have released the visual features extracted using ResNet: https://github.com/declare-lab/MM-Align

:fire: :fire: :fire: For updated baselines, see conv-emotion.

:fire: :fire: :fire: To download the data, use wget:

wget http://web.eecs.umich.edu/~mihalcea/downloads/MELD.Raw.tar.gz
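Once the download finishes, the archive still has to be unpacked. The snippet below is a minimal sketch of one way to do that with standard `tar`. The helper name `extract_archive` and the target directory `./MELD` are illustrative assumptions, not part of the MELD release, and the internal layout of the archive is not described here.

```shell
# Hypothetical helper (not part of MELD): unpack a .tar.gz archive
# into a destination directory, creating the directory if needed.
extract_archive() {
    archive="$1"   # path to the downloaded .tar.gz file
    dest="$2"      # directory to extract into
    mkdir -p "$dest" && tar -xzf "$archive" -C "$dest"
}

# Example usage after the wget command above has completed
# (./MELD is an assumed directory name):
# extract_archive MELD.Raw.tar.gz ./MELD
```

Extracting into a dedicated directory keeps the unpacked files separate from the downloaded archive, which makes it easy to delete and re-extract if a download was interrupted.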

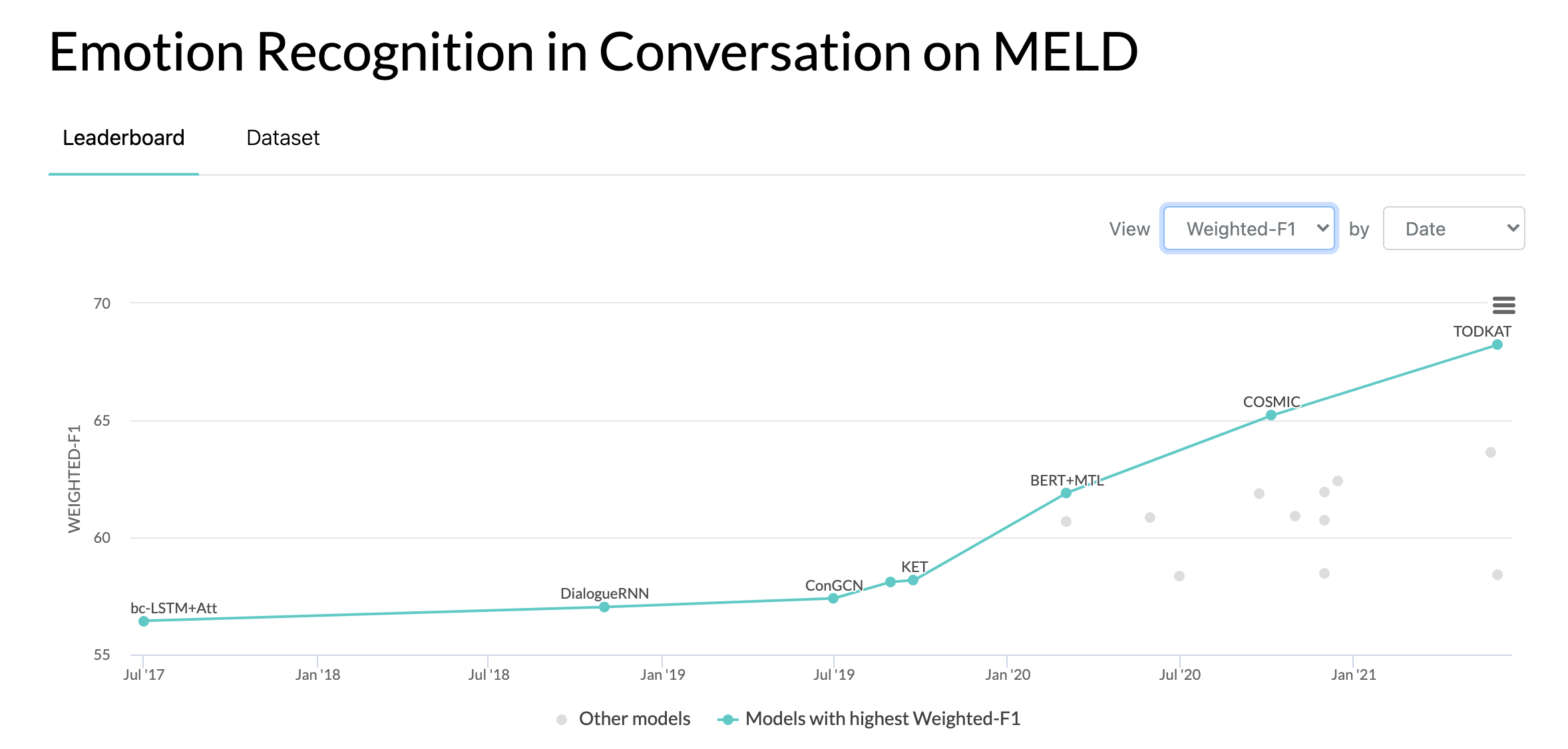

Leaderboard

Updates

10/10/2020: New paper and state-of-the-art results in Emotion Recognition in Conversations on the MELD dataset. See the COSMIC directory for the code. The paper is COSMIC: COmmonSense knowledge for eMotion Identification in Conversations.

22/05/2019: MELD: A Multimodal Multi-Party Dataset for Emotion Recognition in Conversation has been accepted as a full paper at ACL 2019. The updated paper is available at https://arxiv.org/pdf/1810.02508.pdf

22/05/2019: Dyadic MELD has been released. It can be used to test dyadic conversational models.

15/11/2018: The problem in train.tar.gz has been fixed.

Research Works using MELD

Zhang, Yazhou, Qiuchi Li, Dawei Song, Peng Zhang, and Panpan Wang. "Quantum-Inspired Interactive Networks for Conversational Sentiment Analysis." IJCAI 2019.